
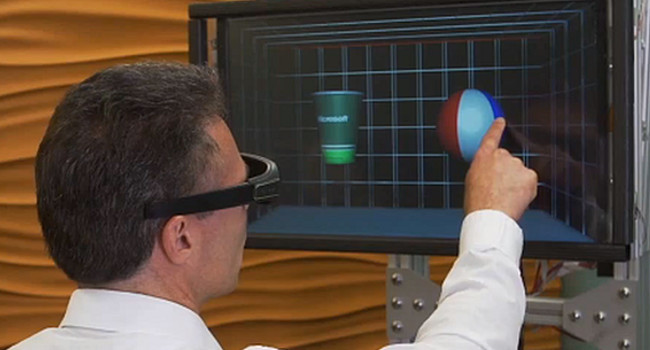

Microsoft makes touchy feely 3D touchscreen

A group of Microsoft researchers has created a stereo-vision 3D monitor mounted on a robotic arm to augment touchscreen interactions. Their aim, they say, is to do “for the sense of touch what computer graphics does for vision.”

Basically, it’s an LCD flat panel attached to a robotic arm that pushes, retracts and moves the screen when it is touched. The system uses sensors to recognize the objects on the screen and, when one is touched, applies a light force to create the illusion that the object has weight, shape, size and depth. In other words, you poke the screen and it pushes back.
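To make the “poke the screen and it pushes back” idea concrete, here is a minimal sketch of a generic penalty-based (“virtual spring”) force model, a common textbook approach in haptics. It is not Microsoft’s published method: the `VirtualSurface` class, the `push_back_force` function and the stiffness values are all hypothetical, chosen only to illustrate how an arm could resist a finger once the screen reaches a virtual object’s surface.

```python
# Minimal 1D "virtual spring" (penalty-based) haptic rendering sketch.
# Assumption: a generic textbook technique, NOT Microsoft's actual method.
# All names and stiffness values are hypothetical, for illustration only.
from dataclasses import dataclass


@dataclass
class VirtualSurface:
    depth_mm: float            # depth at which the virtual object's surface sits
    stiffness_n_per_mm: float  # hypothetical stiffness: higher feels "harder"


def push_back_force(screen_depth_mm: float, surface: VirtualSurface) -> float:
    """Return the force (newtons) the arm should push back with.

    No force until the screen reaches the virtual surface; past that point
    the force grows linearly with penetration depth (Hooke's law, F = k * x).
    """
    penetration = screen_depth_mm - surface.depth_mm
    if penetration <= 0:
        return 0.0
    return surface.stiffness_n_per_mm * penetration


# Hypothetical materials echoing the soft- vs hard-tissue contrast.
soft_tissue = VirtualSurface(depth_mm=10.0, stiffness_n_per_mm=0.05)
hard_tissue = VirtualSurface(depth_mm=10.0, stiffness_n_per_mm=0.5)

if __name__ == "__main__":
    for depth in (5.0, 12.0, 20.0):
        print(depth, push_back_force(depth, soft_tissue), push_back_force(depth, hard_tissue))
```

Giving each virtual material its own stiffness is one simple way the same finger motion could feel soft in one place and firm in another.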

If this is done correctly, with visuals constantly updated so that they “correspond to your finger’s depth perception, this is enough for your brain to accept the virtual world as real,” says Michel Pahud, a senior researcher on the project.

People who have poked at the screen so far were able to “feel the shape of a virtual cup and ball,” for example, while viewing them through “special glasses to get a stereo-vision effect.”

Where does this leave gamers? Well, Microsoft says the device could have medical as well as gaming uses. On the life-saving side of things, the screen could help doctors explore body scans, for example to help find tumors. “You can have different responses for when you touch soft tissue versus hard tissue, which makes for a very rich experience.”

We can already see Surgery Simulator die-hards’ imaginations running amok. For now, though, this kind of technology would have to stick with search-and-find games. As one medical expert pointed out, although it holds promise, it is still “not yet responsive enough to be reliable” for touch-based feedback in robotic surgery.

Another obstacle is that the objects that can be represented are still limited. The squares and cylinders shown so far were all fairly basic and had smooth textures, which made them easy for the sensors to detect and reproduce. Used with detailed real-world surfaces, however, “it would have to respond to rapidly changing shapes.”

Microsoft obviously isn’t the only company experimenting with 3D touch-based screens, or “haptic technology.” Senseg, for example, uses “an ultra-low electrical current to charge very thin durable coatings,” so users feel different textures as they drag their fingers across a touchscreen. Tactus has created a transparent screen where buttons “rise up from the surface on demand.”

These technologies are still in the prototype phase but show great potential. “A patient could be in another country or hospital and the doctor could feel their glands or abdomen from a distance.” The same approach could even lead to touchscreens for the blind: think of braille e-books or braille gaming, for instance.

No word on the launch date from Microsoft yet.

Image via Xerrys